CUDA dim3 constructor

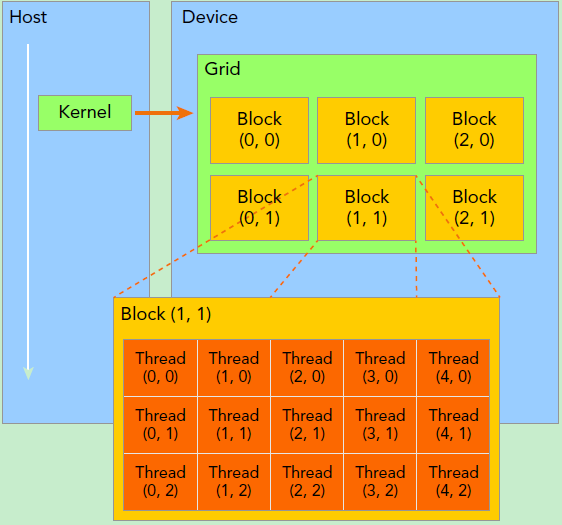

Photo by Nicole King on Unsplash

Introduction

In this article, we will write the linear forward pass, which requires delving into the CUDA programming model and learning about its memory hierarchy. Without further ado, let’s get coding!

Parent Layer

On a high level, deep learning layers must perform two tasks: (1) receive the input and transform it, a.k.a. the forward pass, and (2) differentiate the output with respect to the input, a.k.a. the backward pass.

Of course, this is deep learning 101, but my point in outlining the obvious is that every layer needs two methods, a forward pass and a backward pass, and some layers optionally could be updated. Therefore, there could be a parent layer with three empty functions, forward, backward, and update, that the other layers could inherit for consistency (by the way, after writing this series, I better appreciate the rationale behind deep learning libraries’ design choices such as nn.Module).

Arguably the trickiest section of this series is implementing the linear layer. If you are comfortable with the details behind a matrix multiplication, the following should be relatively simple. Otherwise, I urge you to read these compendious notes to clear up any confusion you may have relating to a linear layer (they’ll be useful for the linear backward pass too).

Note: Throughout the rest of these articles, we are going to implement every function or class in both pure C++ and CUDA since I) once you implement something in pure C++, it’d be easier to write and understand its CUDA counterpart than it would’ve been had you started with CUDA right off the bat and II) testing our CUDA code is crucial, and Python is an inefficient option since we would have to port the random elements of our network from CUDA/C++ to Python. Two CUDA or C++ programs, on the other hand, communicate nicely, thus comparing their results would be easier.

First, we create the header for the CPU linear layer (CPU & C++ and GPU & CUDA will be interchangeable henceforth).

Before we resume, there is something to be cleared up first. Normally, we would have multidimensional arrays representing our input (of the shape bs*n_in), weights (of the shape n_in*n_out), and so on. C++ makes such arrays easy to work with, but in CUDA, dealing with higher-rank tensors is a pain compared to vectors. Ergo, every array, regardless of its dimensions, shall be represented as a vector throughout the rest of this series. Indexing may therefore sound knotty, but it is really not: given a matrix m of shape a*b, element m[i][j] refers to the jth value in the ith row, where i < a and j < b (zero-based indexing). To put it another way, we skip i lines and j columns (e.g. when i = 2 and j = 1, we skip the first two lines to get to row 2, then we skip the first element of row 2 to get to column 1), or just i*b + j elements, to get the desired value.

With that out of the way, let’s write the forward pass itself, which is basically nothing but a matrix multiplication: iterate through the rows i and columns k of the input and weights respectively, calculate their vector multiplication, and store the result in output. For the bias, just add the kth bias to the kth column of output.

In the CUDA version, the loop that evaluates the vector multiplication is the same as the one for CPU, and ind_out, ind_inp, & ind_weights seem extremely familiar, don’t they? As a matter of fact, if you replace row with i, col with k, and i with j, the CUDA code would appear just about indistinguishable from the C++ one. The only disparities would be the first line of the function and the replacement of the two fors with an if. Understanding them requires understanding the following.

Threads, Blocks, and Kernels

Remember the analogy where we had 100 kids working together on 200 additions? Let’s call each student a “thread”, an entity that performs an elementary calculation like the sum of two numbers. There are thus 100 threads, with IDs from one to 100, and each one has been designated an expression to solve. We can also group the threads into “blocks” for better organization. For instance, there could be five blocks, each with 20 threads. This way, the blocks also could have IDs, and the threads’ IDs would be block-wise, not global (i.e. a thread’s ID, between one and 20, would be its ID inside its block).
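To make the block-wise thread IDs concrete, here is a minimal sketch of the bookkeeping in plain C++ rather than CUDA. The names `ThreadId`, `global_id`, and `block_wise_id` are hypothetical helpers of mine, and the IDs are zero-based (as CUDA's built-in indices are) instead of the one-based IDs of the classroom analogy.

```cpp
#include <cassert>

// Hypothetical helper type for illustration; not part of CUDA.
struct ThreadId {
    int block;  // which block the thread belongs to (0 .. n_blocks-1)
    int local;  // the thread's ID inside its block (0 .. block_size-1)
};

// Block-wise ID -> global ID: skip `block` full blocks,
// then `local` threads inside the current block.
int global_id(ThreadId t, int block_size) {
    assert(t.local >= 0 && t.local < block_size);
    return t.block * block_size + t.local;
}

// Global ID -> block-wise ID (the inverse mapping).
ThreadId block_wise_id(int global, int block_size) {
    return ThreadId{global / block_size, global % block_size};
}
```

With five blocks of 20 threads, the last thread of the last block, `ThreadId{4, 19}`, maps to global ID 99. In a real CUDA kernel, the same arithmetic is typically written with the built-in variables as `blockIdx.x * blockDim.x + threadIdx.x`.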
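As for the parent layer with its three empty functions, one possible shape is sketched below. The class name `Module`, the raw-pointer signature of `forward`, and the toy `DoubleLayer` are assumptions made purely for illustration; they are not taken from the series' actual code.

```cpp
#include <cassert>

// Hypothetical parent layer: three no-op methods that concrete layers override.
class Module {
public:
    virtual ~Module() = default;
    virtual void forward(const float* /*inp*/, float* /*out*/, int /*n*/) {}
    virtual void backward() {}
    virtual void update() {}
};

// Toy layer, for illustration only: doubles each of the n input values.
// It has no parameters, so it keeps the inherited no-op backward() and update().
class DoubleLayer : public Module {
public:
    void forward(const float* inp, float* out, int n) override {
        assert(inp != nullptr && out != nullptr);
        for (int i = 0; i < n; ++i) {
            out[i] = 2.0f * inp[i];
        }
    }
};
```

Because every layer shares the same interface, code that drives the network can hold `Module*` pointers and call `forward`, `backward`, and `update` without knowing which concrete layer it is dealing with.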
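The i*b + j indexing rule for matrices stored as vectors can be written down in a few lines. This is only a sketch: `flat_index` and `at` are hypothetical helper names, and the matrix is assumed to be stored row by row.

```cpp
#include <cassert>
#include <vector>

// Flat (row-major) index of element (i, j) in a matrix with b columns:
// skip i full rows of b elements each, then j elements inside row i.
int flat_index(int i, int j, int b) {
    assert(i >= 0 && j >= 0 && j < b);
    return i * b + j;
}

// Hypothetical helper: read m[i][j] from a matrix stored as a vector.
float at(const std::vector<float>& m, int i, int j, int b) {
    return m[flat_index(i, j, b)];
}
```

For a matrix with b = 4 columns, the element at i = 2 and j = 1 sits at flat position 2*4 + 1 = 9: two full rows are skipped, then one element of row 2.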
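The CPU forward pass described above (loop over rows i and columns k, accumulate over j, add the kth bias) could look roughly like this. The index names ind_out, ind_inp, and ind_weights come from the text; the function name `linear_forward_cpu` and the use of `std::vector` are my assumptions, so treat this as a sketch rather than the series' actual implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Sketch of a linear forward pass on flat, row-major arrays.
// inp: bs*n_in, weights: n_in*n_out, bias: n_out, out: bs*n_out.
void linear_forward_cpu(const std::vector<float>& inp,
                        const std::vector<float>& weights,
                        const std::vector<float>& bias,
                        std::vector<float>& out,
                        int bs, int n_in, int n_out) {
    assert(inp.size() == static_cast<std::size_t>(bs) * n_in);
    assert(weights.size() == static_cast<std::size_t>(n_in) * n_out);
    assert(bias.size() == static_cast<std::size_t>(n_out));
    out.assign(static_cast<std::size_t>(bs) * n_out, 0.0f);

    for (int i = 0; i < bs; ++i) {            // rows of the input
        for (int k = 0; k < n_out; ++k) {     // columns of the weights
            int ind_out = i * n_out + k;
            out[ind_out] = bias[k];           // start from the kth bias
            for (int j = 0; j < n_in; ++j) {  // multiply row i by column k
                int ind_inp = i * n_in + j;
                int ind_weights = j * n_out + k;
                out[ind_out] += inp[ind_inp] * weights[ind_weights];
            }
        }
    }
}
```

Initializing each output element with its bias, instead of adding the bias in a separate pass, keeps everything for one output element inside a single (i, k) iteration, which is the part a GPU thread would take over.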